Ever since Google launched AI Mode, I’ve had two questions on my thoughts:

- How will we guarantee our content material will get proven in AI outcomes?

- How will we determine what works when AI search continues to be largely a thriller?

Whereas there’s lots of recommendation on-line, a lot of it’s speculative at greatest. Everybody has hypotheses about AI optimization, however few are operating precise experiments to see what works.

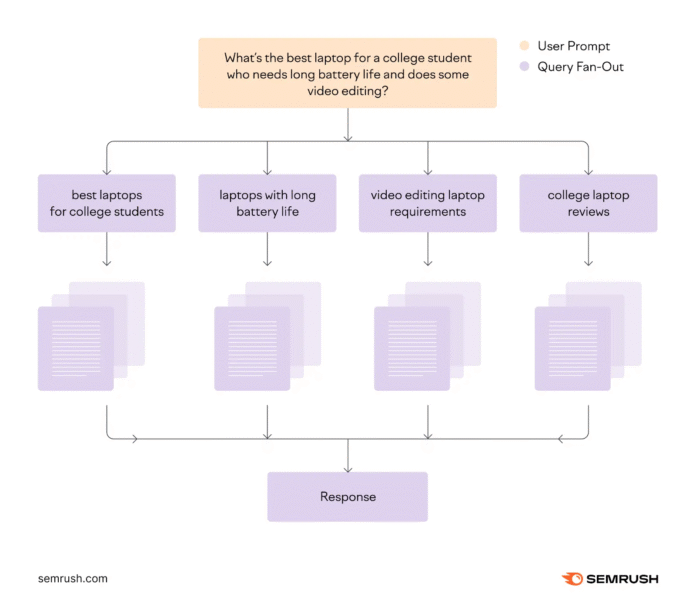

One thought is optimizing for question fan-out. Question fan-out is a course of the place AI programs (notably Google AI Mode and ChatGPT search) take your authentic search question and break it down into a number of sub-queries, then collect info from numerous sources to construct a complete response.

This illustration completely depicts the question fan-out course of.

The optimization technique is easy: Determine the sub-queries round a selected subject after which make sure that your web page contains content material concentrating on these queries. If you happen to try this, you’ve got higher odds of being chosen in AI solutions (no less than in idea).

So, I made a decision to run a small check to see if this really works. I chosen 4 articles from our weblog, had them up to date by a crew member to handle related fan-out queries, and tracked our AI visibility for one month.

The outcomes? Nicely, they reveal some attention-grabbing insights about AI optimization.

Listed here are the important thing takeaways from our experiment:

Key Takeaways

- Optimizing for fan-out queries considerably will increase AI citations: In our small pattern of 4 articles, we greater than doubled citations in tracked prompts from two to 5. Whereas absolutely the numbers are small given the pattern dimension, citations have been the primary metric we aimed to affect, and the rise is directionally indicative of success.

- AI citations could be unpredictable: I checked in periodically through the month, and at one level, our citations went as excessive as 9 earlier than dropping again down to 5. There have been reviews of ChatGPT drastically decreasing citations for manufacturers and publishers throughout the board. It simply reveals how shortly issues can change while you’re counting on AI platforms for visibility.

- Our model mentions dropped for tracked queries, and so did everybody else’s: General, we observed fewer model references showing in AI responses to the queries we have been monitoring. This affected our share of voice, model visibility, and whole point out metrics. Different manufacturers additionally skilled comparable drops. This seems to be a definite situation from quotation modifications—extra about how AI platforms dealt with model mentions throughout our experiment interval.

We’ll talk about the outcomes of this experiment intimately later within the article. First, let me stroll you thru precisely how we carried out this experiment, so you possibly can perceive our methodology and probably replicate or enhance upon our strategy.

How We Ran the Question Fan-Out Experiment

Right here’s how we arrange and ran our experiment:

- I chosen 4 articles from our weblog

- For every chosen article, I researched 10 to twenty fan-out queries

- I partnered with Tushar Pol, a Senior Content material Author on our crew, to assist me execute the content material modifications for this experiment. He edited the content material in our articles to handle as many fan-out queries as doable.

- I arrange monitoring for the fan-out queries so we may measure earlier than and after AI visibility. I used the Semrush Enterprise AIO platform for this. We have been primarily desirous about seeing how our content material modifications impacted visibility in Google’s AI Mode, however our optimizations may additionally increase visibility on different platforms like ChatGPT Search as a aspect impact, so I tracked efficiency there as nicely.

Let’s take a better have a look at every of those steps.

1. Choosing Articles

I had particular standards in thoughts when deciding on the articles for this experiment.

First, I wished articles that had steady efficiency during the last couple of months. Site visitors has been unstable these days, and testing on unstable pages would make it unimaginable to inform whether or not any modifications in efficiency have been attributable to our modifications or simply regular fluctuations.

Second, I averted articles that have been core to our enterprise. This was an experiment, in spite of everything. If one thing went flawed, I did not wish to negatively have an effect on our visibility for vital matters.

After reviewing our content material library, I discovered 4 good candidates:

- A information on methods to create a advertising calendar

- An explainer on what subdomains are and the way they work

- A complete information on Google key phrase rankings

- An in depth walkthrough on methods to conduct technical search engine marketing audits

2. Researching Fan-Out Queries

Subsequent, I moved on to researching fan-out queries for every article.

There’s at the moment no method to know which fan-out queries (associated questions and follow-ups) Google will use when somebody interacts with AI Mode, since these are generated dynamically and may range with every search.

So, I needed to depend on artificial queries. These are AI-generated queries that approximate what Google may generate when folks search in AI Mode.

I made a decision to make use of two instruments to generate these queries.

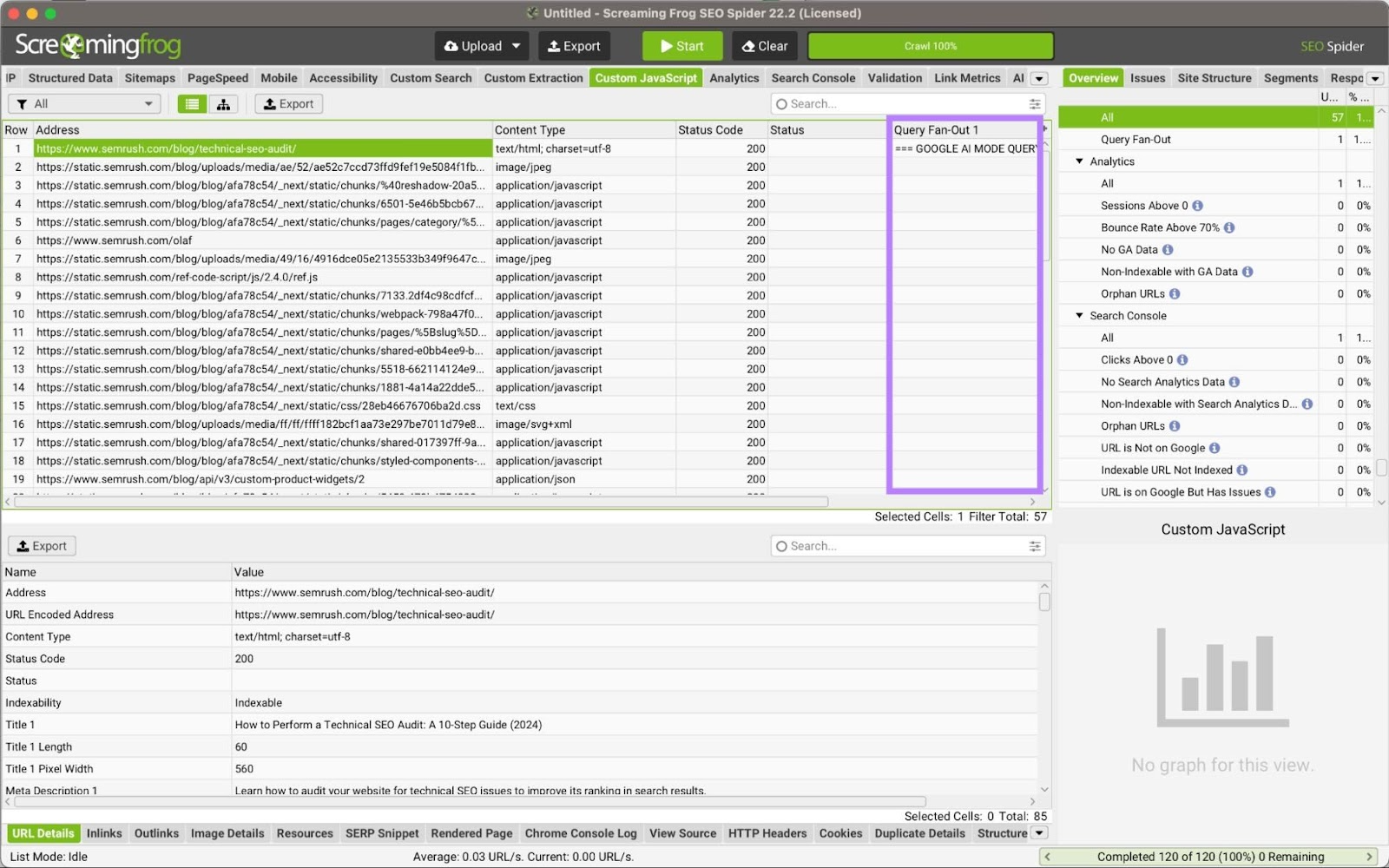

First, I used Screaming Frog. This instrument let me run a customized script towards every article. The script analyzes the web page content material, identifies the primary key phrase it targets, after which performs its personal model of question fan-out to counsel associated queries.

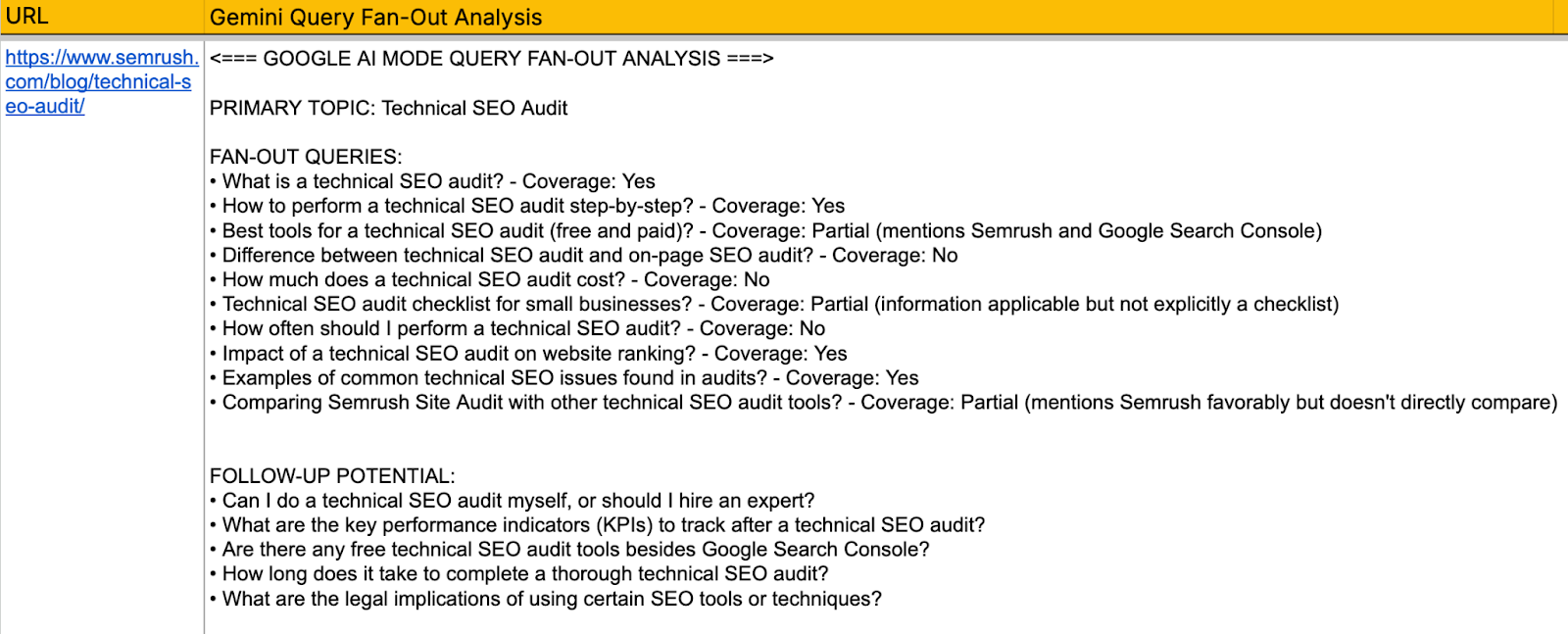

Sadly, the information isn’t correctly seen inside Screaming Frog—every little thing bought crammed right into a single cell. So, I needed to copy and paste the complete cell contents right into a separate Google Sheet.

Now I may really see the information.

The nice factor is that the script additionally checks whether or not our content material already addresses these queries. If some queries have been already addressed, we may skip them. But when there have been new queries, we wanted so as to add new content material for them.

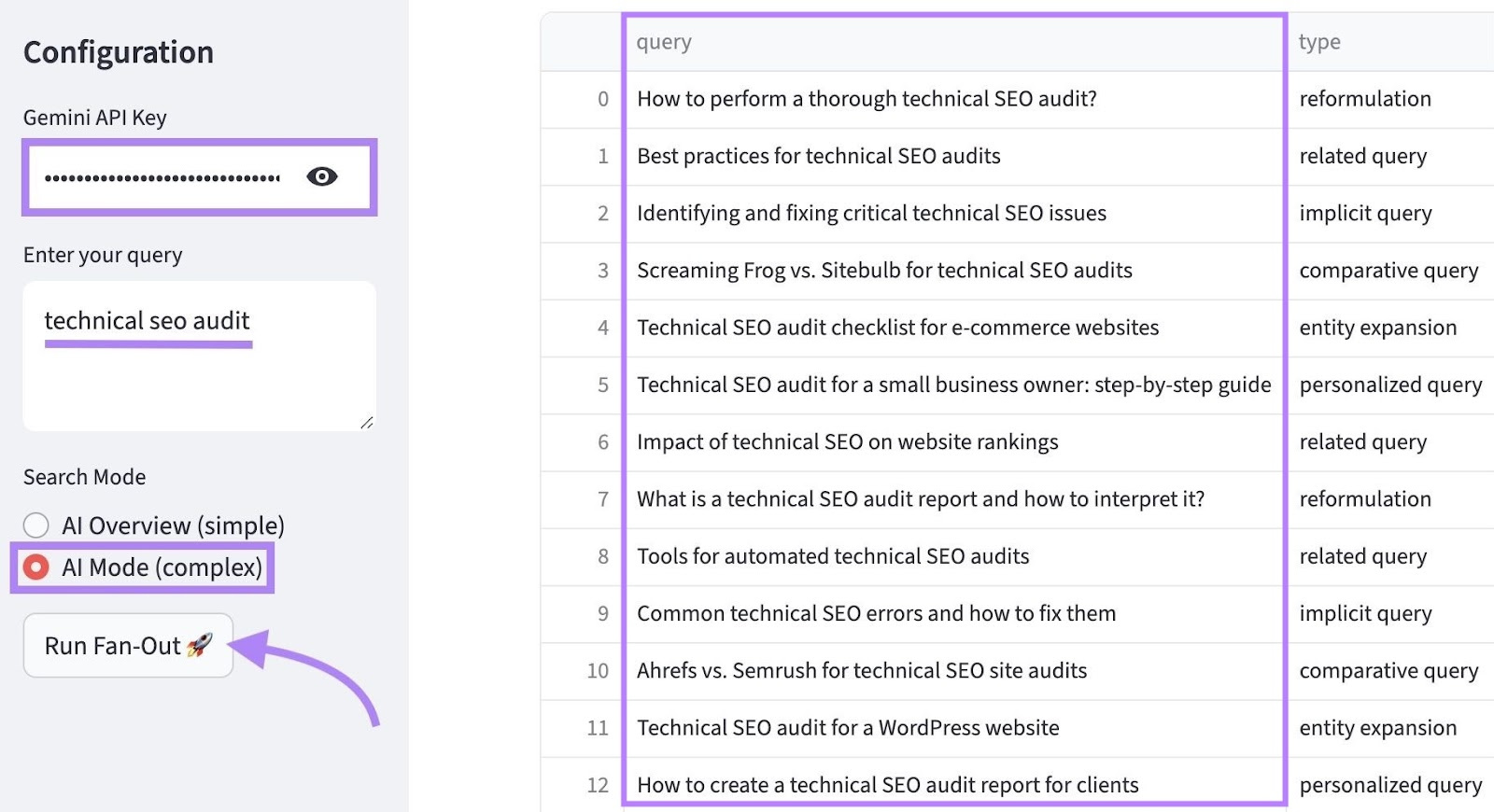

Subsequent, I used Qforia, a free instrument created by Mike King and his crew at iPullRank.

The explanation I used one other instrument is easy: Completely different instruments usually floor totally different queries. By casting a wider internet, I would have a extra complete listing of potential fan-out queries.

Plus, if sure queries are widespread throughout each instruments, that is a sign that addressing them could also be necessary.

The way in which Qforia works is easy: Enter the article’s essential key phrase within the given area, add a Gemini API key, choose the search mode (both Google AI Mode or AI Overview), and run the evaluation. The instrument will generate associated queries for you.

After operating the evaluation for every article, I saved the leads to the identical Google Sheet.

3. Updating the Articles

With a spreadsheet filled with fan-out queries, it was time to really replace our articles. That is the place Tushar stepped in.

My directions have been easy:

Test the fan-out queries for every article and tackle people who weren’t already coated and have been possible so as to add. If some queries felt like they have been past the article’s scope, it was OK to skip them and transfer on.

I additionally advised Tushar that together with the queries verbatim wasn’t all the time mandatory. So long as we have been answering the query posed by the question, the precise wording did not matter as a lot. The objective was ensuring our content material included what readers have been really in search of.

Generally, addressing a question meant making small tweaks—simply including a sentence or two to present content material. Different occasions, it required creating fully new sections.

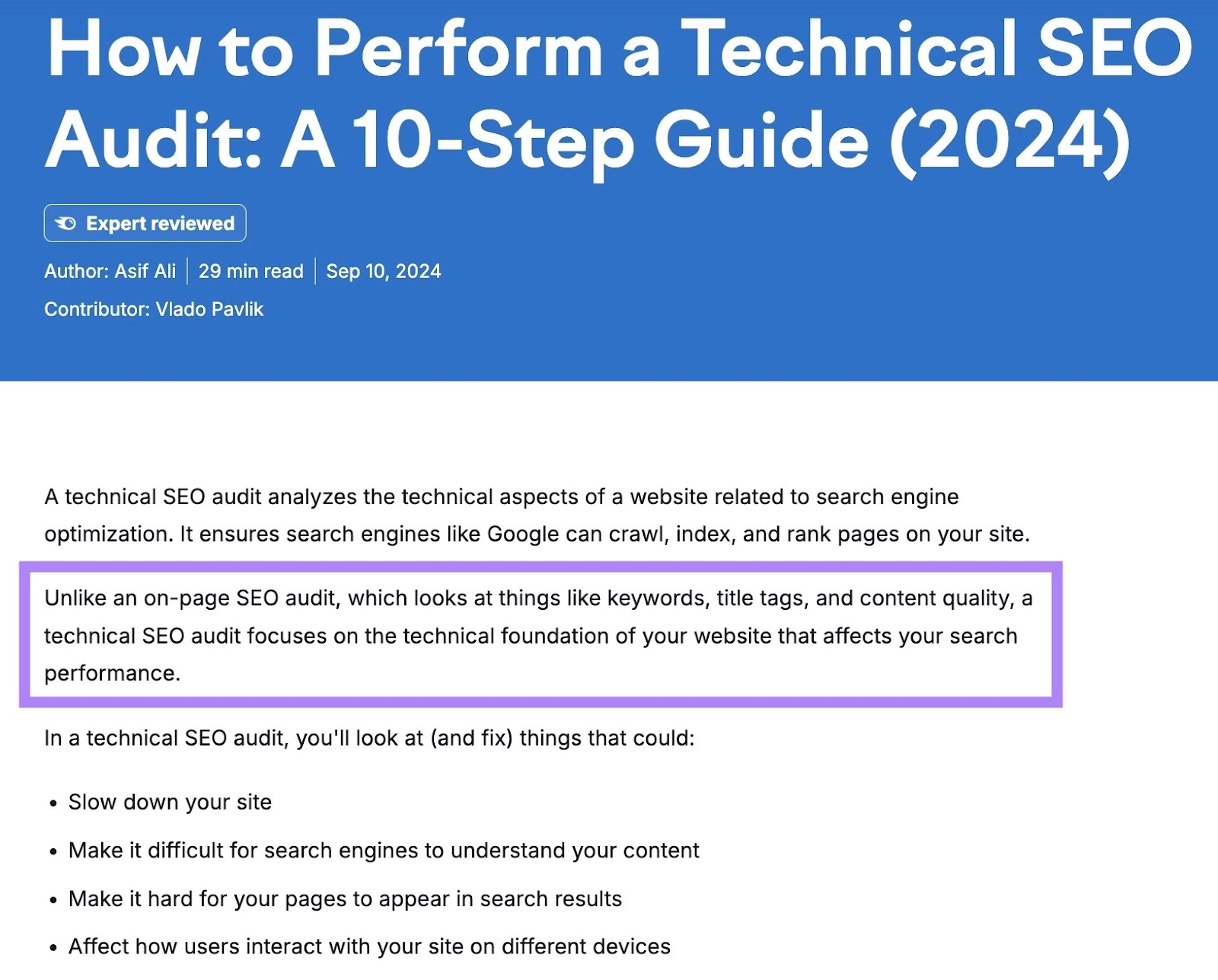

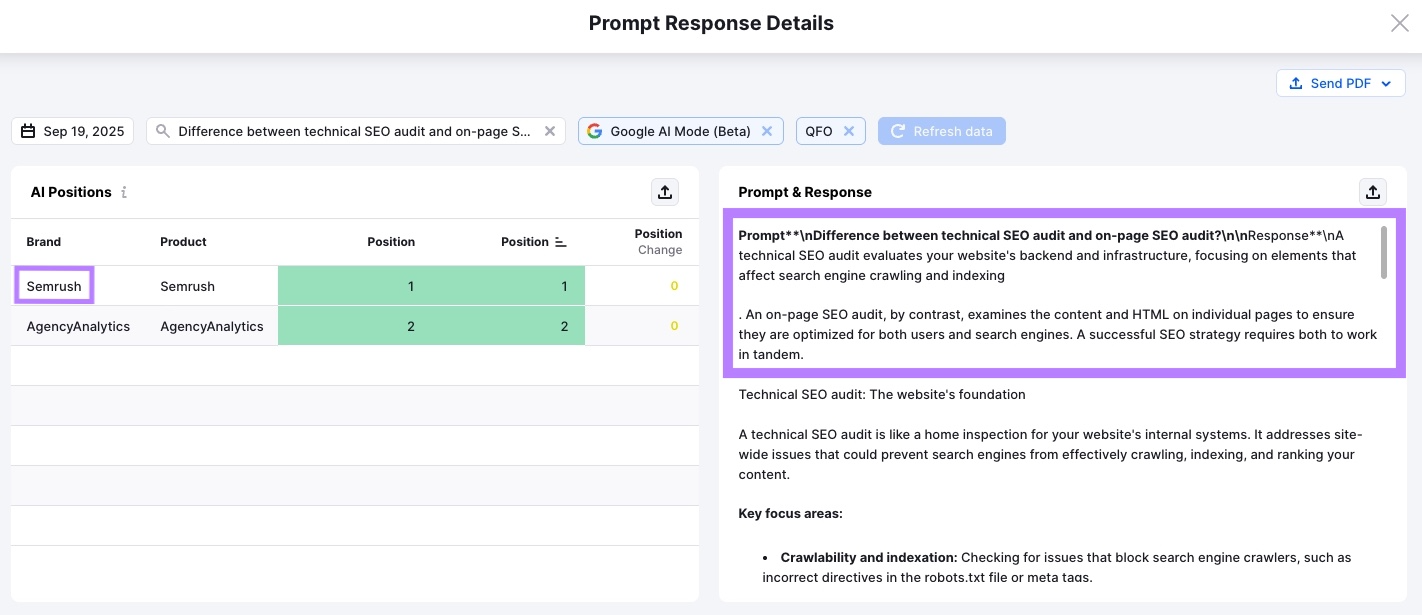

For instance, one of many fan-out queries for our article about doing a technical search engine marketing audit was: “distinction between technical search engine marketing audit and on-page search engine marketing audit.”

We may’ve addressed this question in some ways, however one sensible choice was to make a comparability proper after we outline what a technical search engine marketing audit is.

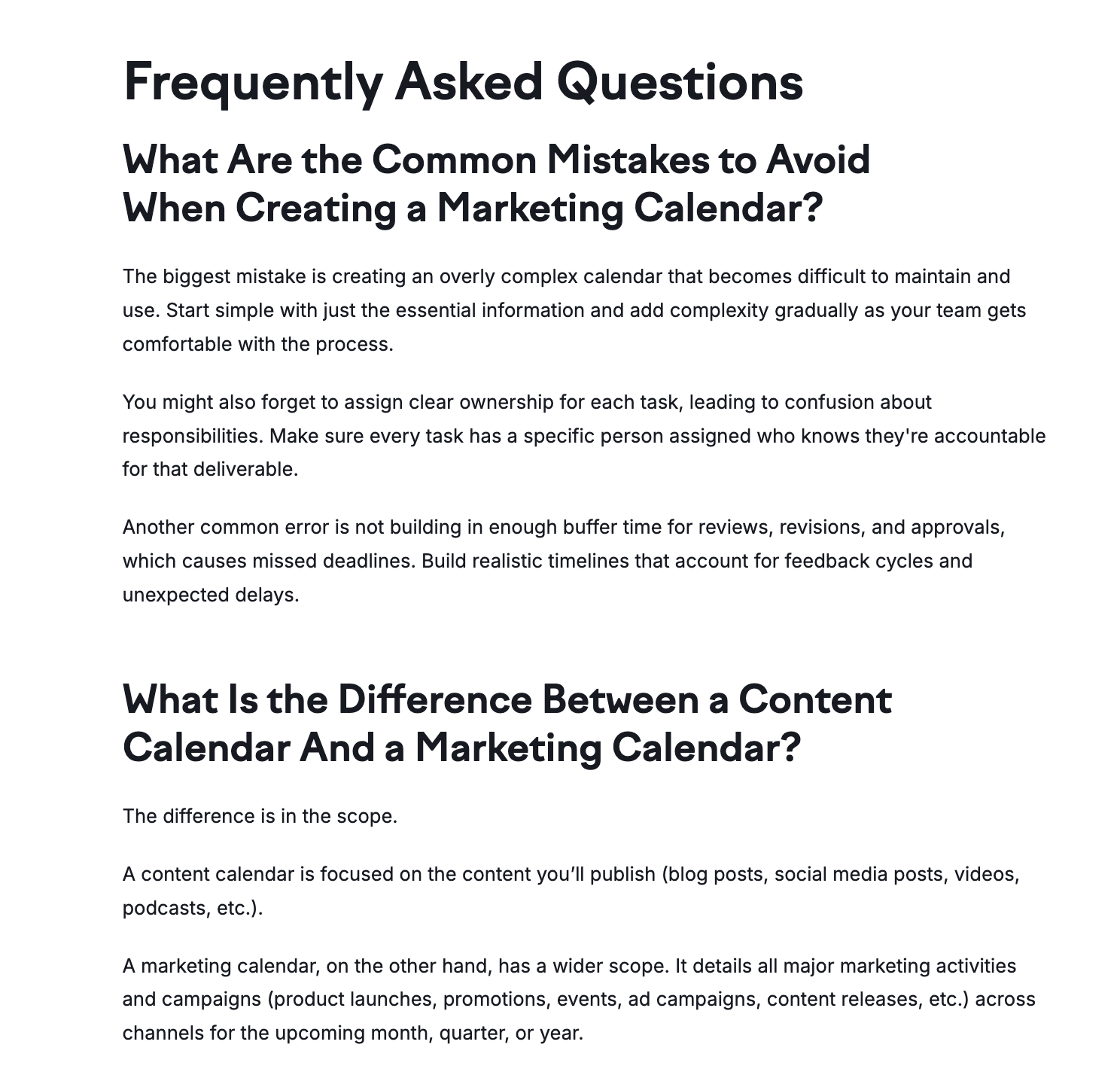

Generally, it wasn’t straightforward (and even doable) to combine queries naturally into the present content material. In these circumstances, we addressed them by creating a brand new FAQ part and overlaying a number of fan-out queries in that part.

Right here’s an instance:

Over the course of 1 week, we up to date all 4 articles from our listing. These articles did not undergo our customary editorial evaluate course of. We moved quick. However that was intentional, given this was an experiment and never a daily content material replace.

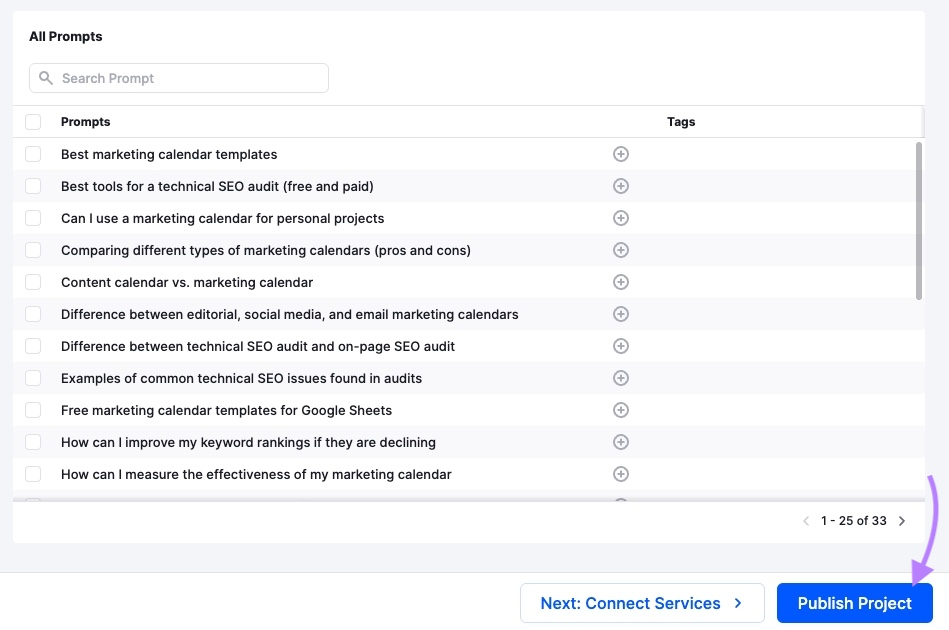

4. Setting Up Monitoring

Earlier than we pushed the updates reside, I recorded every article’s present efficiency to determine a baseline for comparability. This manner, we might be capable of inform if the question fan-out optimization really improved our AI visibility.

I used our Enterprise AIO platform to trace the outcomes. I created a brand new mission within the instrument and plugged in all of the queries we have been concentrating on. The instrument then started measuring our present visibility in Google AI Mode and ChatGPT.

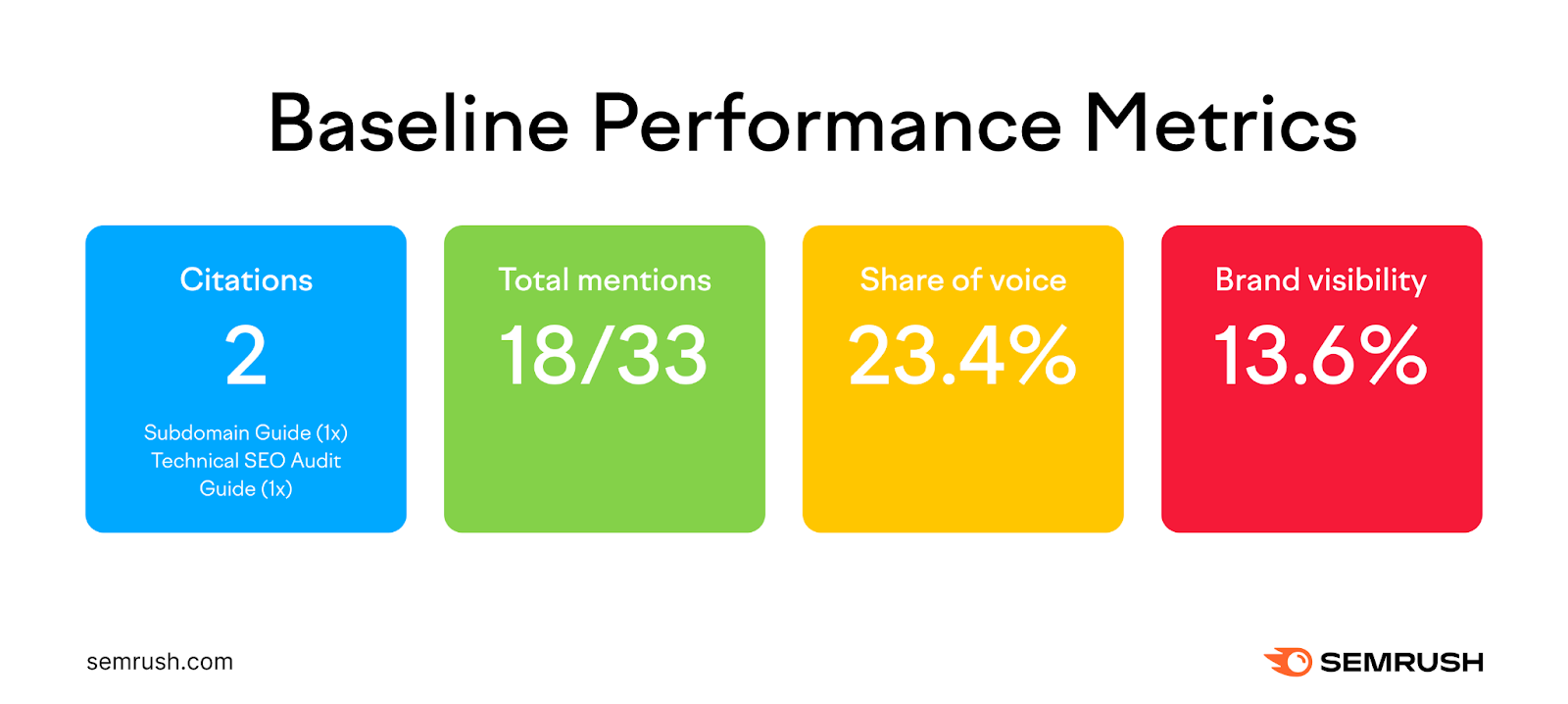

Right here’s what efficiency appeared like in the beginning of this experiment:

- Citations: This measures what number of occasions our pages have been cited in AI responses. Initially, solely two out of our 4 articles have been getting cited no less than as soon as.

- Whole mentions: This metric reveals the ratio of queries for which our model was instantly talked about within the AI response. That ratio was 18/33—which means out of 33 tracked queries, we have been being talked about for 18 queries.

- Share of voice: This can be a weighted metric that considers each model place and point out frequency throughout tracked AI queries. Our rating was 23.4%, which indicated we have been current in some responses however not all or within the lead positions.

- Model visibility: This advised us what proportion of immediate responses talked about our model no less than as soon as, whatever the place.

I made a decision to attend one month earlier than logging metrics once more. Then, it was time to conclude our experiment.

The Outcomes: What We Realized About Question Fan-Out Optimization

The outcomes have been truthfully a blended bag.

First off, some excellent news: our whole citations elevated.

Our 4 articles went from being cited two occasions to 5 occasions—a 150% enhance. For instance, one of many edits we made to the technical search engine marketing article (which we confirmed earlier) bought used as a supply within the AI response.

Seeing our content material cited is precisely what we hoped for, so it is a win. (Regardless of the small pattern dimension.)

Apparently, our last outcomes may’ve been extra spectacular if we ended our experiment earlier. At one level, we bought to 9 citations, however then they decreased when ChatGPT considerably diminished citations for all manufacturers.

This simply reveals how unpredictable AI platforms could be, and that elements utterly exterior your management may affect your visibility.

However what concerning the different metrics we tracked?

Our share of voice went down from 23.4% to twenty.0%, model visibility fell from 13.6% to 10.6%, and our model mentions dropped from 18 to 10.

In keeping with our knowledge, we’re not the one ones who noticed declines in model metrics. Here is a chart displaying what number of manufacturers’ share of voice went down on the similar time.

This occurred as a result of AI platforms talked about fewer model names general when producing responses to our tracked queries. This was a very totally different situation from the quotation fluctuations I discussed earlier.

Contemplating the exterior elements, I imagine our optimization efforts carried out higher than the information reveals. We managed to extend our citations regardless of the issues working towards us.

So, now the query is:

Does Question Fan-Out Optimization Work?

Based mostly on what we discovered in our experiment, I would say sure—however with an enormous asterisk.

Question fan-out optimization may help you get extra citations, which is effective. Nevertheless it’s arduous to drive predictable development when issues are this unstable. Hold this in thoughts while you’re optimizing for AI.

If you happen to’re desirous about studying extra about AI search engine marketing, maintain a watch out for the brand new content material we often publish on our weblog. Listed here are some articles you need to take a look at subsequent: